This is a lengthy post about how Azure sucks and is the worst cloud service, bar none. For the faint of heart, there’s a summary at the end. The theme is horror.

The (Blair Witch) Project

Six months ago, I was burnt out and dissatisfied. I quit my job to take some time off. On one hand, I wanted to pivot towards product (and away from engineering), and on the other, I just really wanted to build something that I wanted to build. A few weeks into this pseudo-sabbatical, I had an interesting idea. I love watching movies and TV shows and I especially love reading little fun trivia, tidbits, or interesting connections that fans often post on reddit or the (now defunct) IMDb forums.

Enter spoiled.tv: a web app that splits movies and TV shows into one-second intervals, uploads the screenshots, and lets fans add trivia, spoilers, cool stuff you may have missed, etc. to the timeline. I like to say it’s a “Rap Genius for movies.”

I also decided, in my fervor, that I would learn and use React to build the front-end: worst case scenario, I learn a new technology and put it on my resume. And while I’m at it, why not learn how to deploy on Azure? I have a few friends who work for Microsoft and always tout Azure as the “AWS killer,” so I might as well give it a try!

So, when all was said and done, I had the trifecta of Web 2.0 requirements:

| Layer | Technology | Azure service |
| --- | --- | --- |
| App | React front-end, Node.js back-end | Azure App Service (Linux) |
| Database | MongoDB | Azure Cosmos DB |
| Storage | The “cloud” | Azure Storage |

Tales from the Crypt: Deploying on Azure

You’d think that after Heroku, Firebase, and Modulus nailed the deployment experience, Microsoft would understand how to handle deploying to the cloud. Ideally, it should go something like this.

[bash]

$ azure login david@example.com password123

$ azure new my-app

$ cd my-app

$ azure deploy my-app

[/bash]

Instead, to deploy a Node.js app to Azure, you have to follow this “quickstart” guide, which is neither quick nor a good starting point. I know what you’re thinking: “oh, it’s not that bad.” And honestly, it didn’t seem bad at first. But then I wanted to integrate with Microsoft’s Visual Studio CI.

Visual Studio splits up the CI process into two phases: building and releasing. The build phase installs Node and all dependencies, zips everything up, runs tests, and prepares the artifact for deployment.

And the script is pretty simple, too (just some boilerplate Meteor/Node stuff):

[bash]

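#!/bin/bash

# Install Meteor, then the app's npm dependencies
curl https://install.meteor.com/ | sh

npm install

# Build the app as a plain directory under builds/bundle
meteor build --directory builds --allow-superuser

# Install the server-side dependencies inside the built bundle
cd builds/bundle/programs/server && npm install && npm install babel-runtime && npm install bcrypt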

[/bash]

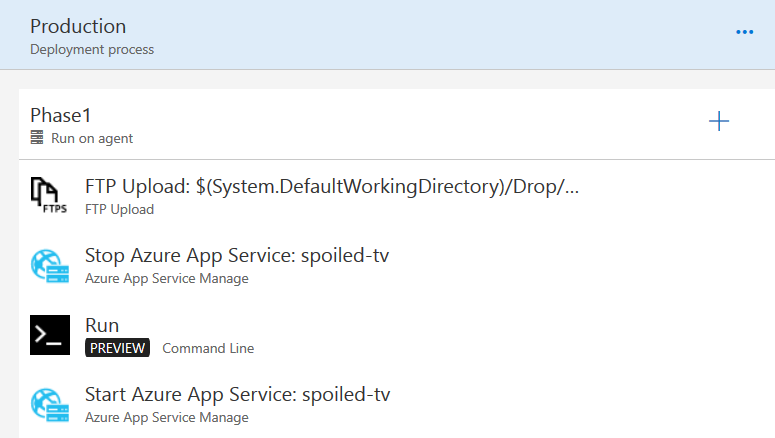

But then we get to releasing and all hell breaks loose. The process looks deceptively simple:

The idea here is to upload the bundle via FTP to the app server and replace the old version of the app. Here’s what that “Run” script looks like:

[bash]

#!/bin/bash

# Give Azure time to actually stop the staging app before touching it
echo "Waiting 60 seconds to ensure staging is off"
sleep 60
echo "Continuing..."

# Start from a fresh copy of the Kudu init files, without their history
git clone https://github.com/dvx/meteor-kudu-init.git
cd meteor-kudu-init
rm -rf .git
git init

git config --global user.email "deploy@spoiled.tv"
git config --global user.name "spoiled.tv deployment process"

# Force-push the result to the app's Kudu git endpoint to trigger a deploy
git remote add azure https://username:password@staging-url.azurewebsites.net/spoiled-tv.git
git add .
git commit -m "deploy"
git push -f azure master

[/bash]

What I’ve learned after many (many) failed builds is that Azure sometimes doesn’t shut down services when it says it does. About 20% of the time, my builds would fail because the server wouldn’t be shut down (and the Node process wouldn’t be stopped, which would throw all kinds of permission and “in use” errors). So, I had to force a 60-second wait before actually triggering the release. Insane, I know, but we’re just getting started.
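A fixed sleep is a blunt instrument. A gentler variant (a sketch, not what my release actually runs) polls until some check says the old process is really gone and gives up after a timeout. `wait_until` and `staging_is_down` are hypothetical names, the URL is the same placeholder as above, and the `curl` check assumes a stopped site no longer answers with a successful HTTP status, which I haven’t verified on Azure.

```shell
#!/bin/bash

# wait_until TIMEOUT_SECONDS INTERVAL_SECONDS COMMAND [ARGS...]
# Re-runs COMMAND until it succeeds; returns 1 if TIMEOUT_SECONDS pass first.
wait_until() {
  local timeout="$1" interval="$2"
  shift 2
  local deadline=$(( SECONDS + timeout ))
  until "$@"; do
    if (( SECONDS >= deadline )); then
      return 1
    fi
    sleep "$interval"
  done
}

# Hypothetical check: treat staging as "down" once it stops answering
# with a successful HTTP status. The URL is a placeholder.
staging_is_down() {
  ! curl --silent --fail --max-time 5 --output /dev/null \
    "https://staging-url.azurewebsites.net/"
}
```

In the release script, the `sleep 60` line would then become something like `wait_until 120 5 staging_is_down || exit 1`.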

Then, we need to use a custom Kudu script (it’s called deploy.sh and lives in the root directory) to actually replace what’s in wwwroot; otherwise, the zip file we upload does nothing. Did I mention this isn’t documented anywhere? All you can find is confused people on Stack Overflow or on Azure’s official forums. I was able to (kind of) figure out what was going on by piecing together example Azure scripts I found on GitHub.

The idea is to combine this Kudu init file repository with the actual (zipped) bundle and then the Kudu script gets executed; the script should extract the bundle to the proper place, and (hopefully) everything will run when the server comes back online. If you’re curious what my release.sh file looks like, you can check it out here. We also need a custom package.json file which tells the Azure Docker container to run the correct main script. You can see it here.
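The startup log further down shows the container finding `scripts.start` in package.json and running `node bundle/main` for `meteor-kudu-init@1.0.0`. A minimal package.json consistent with that log could be generated as below. This is a reconstruction from the log output, not the actual file; the real one may carry more fields.

```shell
#!/bin/bash

# Write a minimal package.json next to the bundle. Only the name, version,
# and start script are taken from the startup log; the real file may differ.
cat > package.json <<'EOF'
{
  "name": "meteor-kudu-init",
  "version": "1.0.0",
  "scripts": {
    "start": "node bundle/main"
  }
}
EOF
```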

For an extra dose of crazy, you’ll notice that in deploy.sh, I can’t use mv (because we’re running as some weird user; we also can’t run any commands as a superuser). So we need this workaround:

[bash]

# We can't use mv because we're the deployment/repository user, so copy (and remove) instead
echo "Moving bundle to ${DEPLOYMENT_TARGET}"
cp -r --no-preserve=mode,ownership "bundle" "${DEPLOYMENT_TARGET}" && rm -rf "bundle"

[/bash]
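Putting the extract step and the copy workaround together, the core of such a script might look roughly like this. It is a sketch under assumptions: `install_bundle` is a hypothetical helper, the archive is assumed to be a `.tar.gz` with a top-level `bundle` directory (a real zip would need `unzip` instead), and `--no-preserve` requires GNU `cp`.

```shell
#!/bin/bash

# install_bundle ARCHIVE TARGET
# Unpacks ARCHIVE (assumed: a .tar.gz containing a top-level "bundle"
# directory) into a scratch directory, copies it into TARGET without
# preserving mode or ownership, then removes the scratch copy.
install_bundle() {
  local archive="$1" target="$2"
  local scratch status=0
  scratch="$(mktemp -d)" || return 1

  if ! tar -xzf "$archive" -C "$scratch"; then
    rm -rf "$scratch"
    return 1
  fi

  # Copy instead of mv, mirroring the workaround above
  mkdir -p "$target" &&
    cp -r --no-preserve=mode,ownership "$scratch/bundle" "$target" ||
    status=$?

  rm -rf "$scratch"
  return "$status"
}
```

A deploy script could then call `install_bundle bundle.tar.gz "${DEPLOYMENT_TARGET}"`, where `bundle.tar.gz` is a placeholder file name.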

Let me reiterate: none of this is documented. It literally took me weeks to figure out all these intricacies. But hey, at least we’re live!

The Twilight Zone: CosmosDB isn’t MongoDB

My first instinct was to use Azure’s CosmosDB as a drop-in replacement for MongoDB because it was a fully managed solution and Microsoft boldly claimed that:

Azure Cosmos DB databases can be used as the data store for apps written for MongoDB.

Too good to be true? Yep. Meteor (my back-end) needs to use oplog tailing, a feature that helps with real-time reactivity and performance/caching enhancements. As it turns out, CosmosDB does not support oplog tailing (making it incompatible with some MongoDB apps), and Microsoft doesn’t document this anywhere.
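For context, a Meteor app typically opts into oplog tailing through a second connection string, `MONGO_OPLOG_URL`, which points at the `local` database of a MongoDB replica set. A rough sketch of that configuration follows; the host, credentials, and the `spoiled` database name are placeholders I made up for illustration.

```shell
#!/bin/bash

# Placeholder host and credentials; substitute a real replica set member.
MONGO_HOST="mongo.example.com:27017"
MONGO_CREDS="username:password"

# Regular application data lives in the app's own database
# ("spoiled" is a made-up name)...
export MONGO_URL="mongodb://${MONGO_CREDS}@${MONGO_HOST}/spoiled"
# ...while oplog tailing reads the replica set's "local" database.
export MONGO_OPLOG_URL="mongodb://${MONGO_CREDS}@${MONGO_HOST}/local"

echo "MONGO_URL=${MONGO_URL}"
echo "MONGO_OPLOG_URL=${MONGO_OPLOG_URL}"
```

If nothing usable sits behind that second URL, Meteor has no oplog to tail and falls back to polling the database instead.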

At this point, I should’ve probably dropped Azure, but instead, I soldiered on. So I set up a few VMs and put MongoDB on them. This part was admittedly pretty easy. All I had to do was click the magic button. After a quick wizard, I had two VMs running in an availability group with MongoDB on them. Great! I thought.

Black Mirror: Everything is Broken

Everything seemed to work. The site had been running for about two months when I checked on it last Tuesday and it was randomly down. Weird. Let’s look at the logs. They were filled with:

[bash]

Got exception while polling query: MongoError: no connection available

Got exception while polling query: MongoError: no connection available

Got exception while polling query: MongoError: no connection available

Got exception while polling query: MongoError: no connection available

Got exception while polling query: MongoError: no connection available

[/bash]

Whoa. What in the world is going on? Clearly my MongoDB instances must be down. But upon further investigation, they weren’t. I tried restarting the app service, but still no dice. Here’s what I saw:

[bash]

Starting OpenBSD Secure Shell server: sshd.

Generating app startup command

Found scripts.start in package.json

Running npm start

npm info it worked if it ends with ok

npm info using npm@5.0.0

npm info using node@v8.0.0

npm info lifecycle meteor-kudu-init@1.0.0~prestart: meteor-kudu-init@1.0.0

npm info lifecycle meteor-kudu-init@1.0.0~start: meteor-kudu-init@1.0.0

> meteor-kudu-init@1.0.0 start /home/site/wwwroot

> node bundle/main

/home/site/wwwroot/bundle/programs/server/node_modules/fibers/future.js:313

throw(ex);

^

MongoError: failed to connect to server [10.0.1.4:27017] on first connect [MongoError: connection 3 to 10.0.1.4:27017 timed out]

at Pool.

at emitOne (events.js:115:13)

at Pool.emit (events.js:210:7)

at Connection.

at Object.onceWrapper (events.js:312:19)

at emitTwo (events.js:125:13)

at Connection.emit (events.js:213:7)

at Socket.

at Object.onceWrapper (events.js:312:19)

at emitNone (events.js:105:13)

npm info lifecycle meteor-kudu-init@1.0.0~start: Failed to exec start script

npm ERR! code ELIFECYCLE

npm ERR! errno 1

npm ERR! meteor-kudu-init@1.0.0 start: node bundle/main

npm ERR! Exit status 1

npm ERR!

npm ERR! Failed at the meteor-kudu-init@1.0.0 start script.

npm ERR! This is probably not a problem with npm. There is likely additional logging output above.

npm WARN Local package.json exists, but node_modules missing, did you mean to install?

npm ERR! A complete log of this run can be found in:

npm ERR! /root/.npm/_logs/2018-02-23T00_08_21_678Z-debug.log

[/bash]

Why is my app trying to connect to an internal IP address (10.0.1.4:27017) when I clearly indicated otherwise in the settings? I was using the fully qualified domain name provided by Azure: it looked something like mongodb://username:password@my-mongodb-server.southcentralus.cloudapp.azure.com:27017. To add to the confusion, my app instance wasn’t even linked to a private network. I figured it must be a DNS setting somewhere. I found a DNS property (in the NIC settings) and changed it to Google’s DNS servers:

And it worked! Except that it stopped working the next day and, for whatever reason, the FQDN would always resolve to an internal IP and be unreachable after that. I even tried using the public IP address and it would still resolve to the internal IP. At this point, I just didn’t care. I just wanted the darn thing to be up. Guess which button I clicked? It rhymes with schmelete.

I exported my MongoDB data and moved it over to mLab. I’m moving the app to Heroku soon, but I’m going to stick with Azure Storage (for now) because I’m lazy and migrating would take forever. Bye Azure, you suck.
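For anyone chasing a similar ghost, one quick sanity check is to see what a hostname resolves to from inside the app container and whether that address is private. The sketch below assumes a glibc-based Linux where `getent ahostsv4` is available; `is_private_ipv4` and `check_host` are hypothetical helper names.

```shell
#!/bin/bash

# is_private_ipv4 ADDRESS
# Succeeds if ADDRESS falls in one of the RFC 1918 private ranges
# (10.0.0.0/8, 172.16.0.0/12, 192.168.0.0/16).
is_private_ipv4() {
  local a b c d
  IFS=. read -r a b c d <<< "$1"
  [[ "$a" =~ ^[0-9]+$ && "$b" =~ ^[0-9]+$ ]] || return 1
  # Force base 10 so octets with leading zeros are not read as octal
  a=$(( 10#$a )) b=$(( 10#$b ))
  (( a == 10 )) && return 0
  (( a == 172 && b >= 16 && b <= 31 )) && return 0
  (( a == 192 && b == 168 )) && return 0
  return 1
}

# check_host HOSTNAME
# Prints the first IPv4 address HOSTNAME resolves to and flags private ones.
check_host() {
  local ip
  ip="$(getent ahostsv4 "$1" | awk 'NR == 1 { print $1 }')"
  if [[ -z "$ip" ]]; then
    echo "$1 does not resolve"
    return 1
  fi
  if is_private_ipv4 "$ip"; then
    echo "$1 -> $ip (private, only reachable from inside that network)"
  else
    echo "$1 -> $ip (public)"
  fi
}
```

Calling `check_host my-mongodb-server.southcentralus.cloudapp.azure.com` from the container would at least show which side of the fence the name lands on before the app tries to connect.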

Post Mortem

I took one for the team, folks. I tried Azure and after a few hundred bucks spent, I can safely say I never want to use it again. Azure fails for many reasons, but here’s my laundry list:

- Poor or absent documentation

- Atrocious and cryptic deployment process

- Hidden fees, especially w.r.t. Visual Studio CI

- Confusing, non-responsive, and cramped UI

- APIs that break due to race conditions (especially when using the UI)

- Everything breaking constantly gives out a “we’re still in beta” vibe

- Awful CLI

- Did I mention terrible documentation?

Microsoft lives in a bubble. Most of their Azure customers will be large companies that sign year-long contracts for hundreds of thousands (if not millions) of dollars. I’m sure they don’t care about my poor experience, but from what I’ve seen, mine isn’t the only one. Prototyping a project? By golly, don’t use Azure. I was excited for a new player in a space that’s dominated by AWS, but it just wasn’t meant to be.